How to Set Up a Ghost Blog using Docker and DigitalOcean Spaces

This guide describes how to set up a Ghost blog using Docker and DigitalOcean Spaces. Spaces stores the uploaded images and also serves them through its CDN. This blog itself runs on the setup described below.

Requirements

- Get yourself a domain and point it to DigitalOcean's name servers. You can read more about how to change domain name servers here

- Set up your VPS and install Docker and Docker Compose

- Set up DigitalOcean Spaces with your domain. You can read a setup guide for DigitalOcean Spaces here

Folder Structure

.
|-- .dockerignore
|-- .gitignore
|-- docker-compose.yml
|-- secrets.env
|-- ghost
    |-- Dockerfile

Ghost Dockerfile

The Dockerfile for the Ghost image is located in the ghost folder and contains the following:

FROM ghost:2.25.1
WORKDIR /var/lib/ghost
RUN npm install ghost-storage-adapter-s3
RUN cp -vr ./node_modules/ghost-storage-adapter-s3 ./current/core/server/adapters/storage/digitalocean

The first line uses the Ghost Docker image version 2.25.1 as the base.

FROM ghost:2.25.1

The second line changes the working directory to /var/lib/ghost, which is where the Ghost Docker image installs Ghost.

WORKDIR /var/lib/ghost

Ghost supports custom storage adapters. These let Ghost store uploaded images somewhere other than the local disk, for example Amazon S3 or DigitalOcean Spaces. In this guide we use DigitalOcean Spaces. We install an npm package called ghost-storage-adapter-s3. The package is written for Amazon S3, but DigitalOcean Spaces supports the S3 API, so the same adapter works with Spaces.

RUN npm install ghost-storage-adapter-s3

Lastly, we copy the npm package into the storage adapter folder at current/core/server/adapters/storage/digitalocean. The last part, digitalocean, is our own name for the storage adapter. You can change it, but remember the folder name because you will need it later. Placing the adapter inside the Ghost installation, rather than in the content folder, also keeps it out of the path that docker-compose later mounts as a volume.

RUN cp -vr ./node_modules/ghost-storage-adapter-s3 ./current/core/server/adapters/storage/digitalocean

Docker Compose

The complete docker-compose.yml file is shown below:

version: '3.1'

services:
  ghost:
    build: ghost
    restart: always
    expose:
      - "2368"
    depends_on:
      - db
    env_file:
      - secrets.env
    volumes:
      - ~/data/ghost:/var/lib/ghost/content

  db:
    image: mysql:5.7
    restart: always
    env_file:
      - secrets.env
    volumes:
      - ~/data/mysql:/var/lib/mysql

networks:
  default:
    external:
      name: nginx-proxy

Ghost

The first line of the ghost service tells Docker Compose to build the image from the Dockerfile in the local ghost folder:

build: ghost

Then we set the container to always restart:

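restart: always

The container exposes port 2368, the port Ghost listens on, to other containers on the same network, such as the nginx proxy. expose does not publish the port on the host; that would require a ports entry.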

expose:
  - "2368"

The ghost service depends on the db service, so Docker Compose starts the db container first. Note that depends_on only controls the start order; it does not wait until MySQL is ready to accept connections.

depends_on:
  - db

Ghost stores its content (themes, logs and other files) in /var/lib/ghost/content. To keep those files when the container is restarted or recreated, we mount the folder as a volume, so the content is stored on the host in ~/data/ghost.

volumes:
  - ~/data/ghost:/var/lib/ghost/content

Lastly, we add the secrets file. We don't want the secrets in the git repository, so they live in a separate secrets.env file on the host that is loaded through env_file. For this setup to work, secrets.env needs a specific set of variables, described in the Secrets section.

env_file:
  - secrets.env

Database

The first line of the database service says that the MySQL 5.7 Docker image should be used:

image: mysql:5.7

The second line tells the container to always restart:

restart: always

Next, we add the secrets file:

env_file:
  - secrets.env

Lastly, we mount the MySQL data folder as a volume so the data persists when the container is restarted or recreated:

volumes:
  - ~/data/mysql:/var/lib/mysql

Secrets

For the setup to work, you need the following variables in the secrets.env file:

| Variable name | Value |
|---|---|
| database__client | mysql |
| database__connection__host | db |
| database__connection__user | database_username |
| database__connection__password | database_password |
| database__connection__database | ghost |
| MYSQL_ROOT_PASSWORD | database_password |
| storage__active | digitalocean |
| AWS_ACCESS_KEY_ID | spaces_access_key |
| AWS_SECRET_ACCESS_KEY | spaces_access_secret |
| AWS_DEFAULT_REGION | your_spaces_region_location |
| GHOST_STORAGE_ADAPTER_S3_PATH_BUCKET | your_space_name |
| GHOST_STORAGE_ADAPTER_S3_ASSET_HOST | your_cdn_url |
| GHOST_STORAGE_ADAPTER_S3_ENDPOINT | your_spaces_endpoint |
| GHOST_STORAGE_ADAPTER_S3_ACL | public-read |

Ghost reads its configuration from environment variables. Each double underscore separates one nesting level, so database__connection__host sets database.connection.host. The database__connection variables tell Ghost how to connect to the database. Replace the placeholder values in the table with your own.
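To make the double-underscore naming concrete, here is a small Python sketch. It turns a flat set of variables into the nested structure Ghost sees. It only illustrates the naming convention. It is not Ghost's own config loader, and the function name to_nested_config is hypothetical.

```python
def to_nested_config(env):
    """Turn Ghost-style environment variables into a nested dict.

    Illustration only (hypothetical helper): each "__" starts a new nesting
    level, so database__connection__host ends up at
    config["database"]["connection"]["host"]. Names without "__"
    (such as AWS_ACCESS_KEY_ID) stay as top-level keys.
    """
    config = {}
    for name, value in env.items():
        parts = name.split("__")
        node = config
        # Walk or create one dict per nesting level, except the last part.
        for part in parts[:-1]:
            node = node.setdefault(part, {})
        node[parts[-1]] = value
    return config


if __name__ == "__main__":
    secrets = {
        "database__client": "mysql",
        "database__connection__host": "db",
        "database__connection__database": "ghost",
        "storage__active": "digitalocean",
    }
    print(to_nested_config(secrets))
```

Here database__client and database__connection__host both end up under the same database key. That is why the variables in the table share their prefixes.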

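MYSQL_ROOT_PASSWORD (with single underscores) is read by the mysql image and sets the root password for the database. It has to be the same as database__connection__password. Because only the root account is configured this way, database__connection__user should be root. To use a separate account instead, see the MYSQL_USER, MYSQL_PASSWORD and MYSQL_DATABASE variables of the mysql image.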
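Before running docker-compose, it can help to check secrets.env for missing values and for the password rule above. The following is a minimal sketch. It assumes a plain KEY=value file with optional # comments. The helper names parse_env_file and check_secrets are hypothetical, and the required-key list simply mirrors the table. Note that the mysql image reads MYSQL_ROOT_PASSWORD with single underscores.

```python
# Keys from the secrets table; an empty or missing value counts as a problem.
REQUIRED_KEYS = [
    "database__client",
    "database__connection__host",
    "database__connection__user",
    "database__connection__password",
    "database__connection__database",
    "MYSQL_ROOT_PASSWORD",
    "storage__active",
    "AWS_ACCESS_KEY_ID",
    "AWS_SECRET_ACCESS_KEY",
    "AWS_DEFAULT_REGION",
    "GHOST_STORAGE_ADAPTER_S3_PATH_BUCKET",
    "GHOST_STORAGE_ADAPTER_S3_ASSET_HOST",
    "GHOST_STORAGE_ADAPTER_S3_ENDPOINT",
    "GHOST_STORAGE_ADAPTER_S3_ACL",
]


def parse_env_file(text):
    """Parse simple KEY=value lines, skipping blank lines and # comments."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        # Split on the first "=" only, so values may contain "=".
        key, value = line.split("=", 1)
        values[key.strip()] = value.strip()
    return values


def check_secrets(text):
    """Return a list of problems found in the contents of secrets.env."""
    env = parse_env_file(text)
    problems = [f"missing {key}" for key in REQUIRED_KEYS if not env.get(key)]
    # The root password must match the password Ghost connects with.
    if env.get("MYSQL_ROOT_PASSWORD") != env.get("database__connection__password"):
        problems.append(
            "MYSQL_ROOT_PASSWORD must equal database__connection__password"
        )
    return problems


if __name__ == "__main__":
    with open("secrets.env") as f:
        for problem in check_secrets(f.read()):
            print(problem)
```

Running it in the root folder prints nothing when every variable is present and the two passwords match.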

storage__active is the name of the custom storage adapter from earlier, that is, the folder name used in the Dockerfile. In this case it is digitalocean.
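storage__active has to match the folder the adapter was copied to. A small check can therefore read that folder name from the Dockerfile's RUN cp line and compare the two. This is a hypothetical helper, not part of Ghost. It assumes a Dockerfile like the one in this guide, where the copy destination is the last argument of cp and contains /adapters/storage/.

```python
import posixpath
import shlex


def adapter_name_from_dockerfile(dockerfile_text):
    """Return the folder name the storage adapter is copied to, or None."""
    for line in dockerfile_text.splitlines():
        tokens = shlex.split(line)
        # Look for a "RUN cp ... <destination>" line.
        if len(tokens) >= 3 and tokens[0] == "RUN" and tokens[1] == "cp":
            destination = tokens[-1].rstrip("/")
            if "/adapters/storage/" in destination:
                # The last path component is the adapter name.
                return posixpath.basename(destination)
    return None


def check_storage_active(dockerfile_text, storage_active):
    """True if storage__active names the folder the Dockerfile copies to."""
    return adapter_name_from_dockerfile(dockerfile_text) == storage_active


if __name__ == "__main__":
    with open("ghost/Dockerfile") as f:
        print(adapter_name_from_dockerfile(f.read()))
```

If you rename the folder in the Dockerfile, this check makes it easy to spot a storage__active value that was not updated.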

The AWS variables are used by the storage adapter, which is a Node.js package. You find AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY in the DigitalOcean control panel under the API tab. Click Generate New Key to create a new access key and secret. The key in DigitalOcean corresponds to AWS_ACCESS_KEY_ID, and the secret corresponds to AWS_SECRET_ACCESS_KEY. Note down the secret, because it will not be shown again.

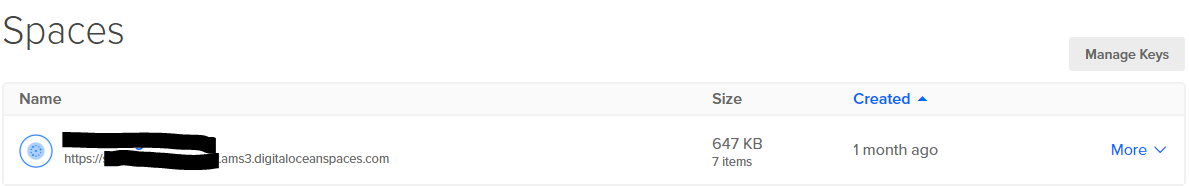

To find AWS_DEFAULT_REGION, open the Spaces tab. In the Spaces list, each space has a link below its name of the form name.region.digitaloceanspaces.com, and the region is the part after the space name. In my case the region is ams3, so AWS_DEFAULT_REGION is ams3.

The GHOST_STORAGE_ADAPTER variables are used by the storage adapter in the Ghost container to upload static files to your space.

GHOST_STORAGE_ADAPTER_S3_PATH_BUCKET is the name of your space. In the space URL, it is the text in front of the region.

GHOST_STORAGE_ADAPTER_S3_ASSET_HOST is the CDN URL of your space. In my case that is https://static.devguides.dev/images. As you can see, I created a folder called images, and Ghost uploads the images there.

GHOST_STORAGE_ADAPTER_S3_ENDPOINT is the Spaces endpoint. In my case it is ams3.digitaloceanspaces.com/images. Again, notice that I add /images.

GHOST_STORAGE_ADAPTER_S3_ACL should be set to public-read. This makes every image you upload readable by everyone.
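The bucket name and the region both come from the space URL, which has the form name.region.digitaloceanspaces.com. The Python sketch below derives them and assembles the adapter variables. It follows the same folder convention as above, appending the folder to both the CDN URL and the endpoint. The function names and the space name my-space are hypothetical. Only the CDN URL and the images folder are taken from this guide.

```python
from urllib.parse import urlparse

SPACES_SUFFIX = ".digitaloceanspaces.com"


def parse_space_url(space_url):
    """Split https://<name>.<region>.digitaloceanspaces.com into (name, region)."""
    host = urlparse(space_url).hostname or ""
    if not host.endswith(SPACES_SUFFIX):
        raise ValueError(f"not a Spaces URL: {space_url}")
    # "my-space.ams3" -> name "my-space", region "ams3"
    name, _, region = host[: -len(SPACES_SUFFIX)].rpartition(".")
    if not name or not region:
        raise ValueError(f"expected <name>.<region> in {space_url}")
    return name, region


def storage_env(space_url, cdn_url, folder):
    """Build the storage adapter variables, using the /<folder> layout of this guide."""
    name, region = parse_space_url(space_url)
    return {
        "AWS_DEFAULT_REGION": region,
        "GHOST_STORAGE_ADAPTER_S3_PATH_BUCKET": name,
        "GHOST_STORAGE_ADAPTER_S3_ASSET_HOST": f"{cdn_url.rstrip('/')}/{folder}",
        "GHOST_STORAGE_ADAPTER_S3_ENDPOINT": f"{region}{SPACES_SUFFIX}/{folder}",
        "GHOST_STORAGE_ADAPTER_S3_ACL": "public-read",
    }


if __name__ == "__main__":
    env = storage_env(
        "https://my-space.ams3.digitaloceanspaces.com",
        "https://static.devguides.dev",
        "images",
    )
    for key, value in env.items():
        print(f"{key}={value}")
```

The printed lines use the same KEY=value format as secrets.env, so they can be pasted in after checking them.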

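When everything is set up, run docker-compose up -d in the root folder to start the Ghost blog. The nginx-proxy network is declared as external, so it must already exist; you can create it with docker network create nginx-proxy. When Docker has finished downloading and building the images, Ghost listens on port 2368 inside the container. Because that port is only exposed to the nginx-proxy network, the site is reached through the proxy. To open it directly at localhost:2368, publish the port with ports instead of expose.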

After following this guide, you should have a working Ghost blog with HTTPS and DigitalOcean Spaces as the CDN. If you want to try it, a small $5 DigitalOcean droplet should be enough to run this setup. At the time of writing, this blog runs on a $5 droplet.

Do you want to support this blog? Sign up to DigitalOcean using the following referral link: https://m.do.co/c/a51c1128b7df. If you use the link you get $50 credit over 30 days on DigitalOcean.